1.0 Senior Thesis, BSc Physics (1981-1983)

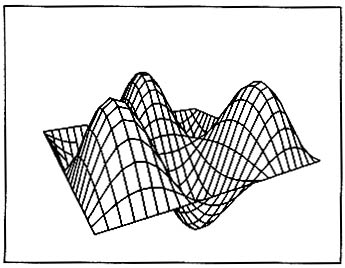

One of the vibrating membrane images from my Physics Senior Project (1981-1983)

Between 1981 and 1983 I modeled vibrating strings and square membranes for my Senior Thesis toward my BS degree in Physics (Cal Poly San Luis Obispo). I simulated the motion of the strings and membranes on a mainframe computer (CDC Cyber 174) using D'Alembert's solution to the wave equation as well as a Fourier series solution. I used a primitive storage scope/graphics terminal to display images of the motion one frame at a time.
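For a plucked string with fixed ends and no initial velocity, the two approaches must agree. D'Alembert writes the motion as two copies of the initial shape traveling in opposite directions, while the Fourier solution sums standing-wave modes. The following is a minimal NumPy sketch of that cross-check, not the original Cyber 174 code. The unit string length, unit wave speed, pluck position `X0`, and height `H` are arbitrary assumptions.

```python
import numpy as np

L = 1.0    # string length (assumed)
C = 1.0    # wave speed (assumed)
X0 = 0.3   # pluck position (assumed)
H = 0.1    # pluck height (assumed)

def initial_shape(x):
    """Triangular pluck shape on [0, L], zero at both fixed ends."""
    x = np.asarray(x, dtype=float)
    return np.where(x <= X0, H * x / X0, H * (L - x) / (L - X0))

def odd_periodic_extension(x):
    """Extend the shape as an odd function with period 2L, which enforces the fixed ends."""
    x = np.mod(np.asarray(x, dtype=float), 2 * L)   # map into [0, 2L)
    return np.where(x <= L, initial_shape(x), -initial_shape(2 * L - x))

def dalembert(x, t):
    """y(x, t) = [F(x - ct) + F(x + ct)] / 2 for zero initial velocity."""
    return 0.5 * (odd_periodic_extension(x - C * t) + odd_periodic_extension(x + C * t))

def fourier(x, t, n_terms=200):
    """Truncated Fourier series: sum of b_n sin(n pi x / L) cos(n pi c t / L)."""
    x = np.asarray(x, dtype=float)
    y = np.zeros_like(x)
    for n in range(1, n_terms + 1):
        k = n * np.pi / L
        b_n = 2 * H * L**2 / (np.pi**2 * n**2 * X0 * (L - X0)) * np.sin(k * X0)
        y += b_n * np.sin(k * x) * np.cos(k * C * t)
    return y
```

For a square membrane of side L, the Fourier approach extends to a double sum of modes sin(mπx/L) sin(nπy/L) cos(ω_mn t) with ω_mn = cπ√(m² + n²)/L. These frequencies are not integer multiples of one fundamental, so a general membrane motion does not repeat the way the string's does.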

I rigged up a Super 8 film camera with B/W film to take images one frame at a time. I built a funny gizmo box to trigger the camera in animation mode: I used the terminal bell (Ctrl-G) and a sound-actuated switch to trigger the camera. I also brewed up the code to do the 3-D display/hidden line removal for the vibrating membrane. I processed all of the film by hand in a movie development tank. Around 1988 I noticed that the emulsion on the film was becoming damaged, so I transferred the movies to VHS video tape. In March 2002 I digitized the video tape.

I was really interested in using computer graphics to display the motion of vibrating strings and membranes. It never occurred to me at the time that I could have written sound files from this code.

Here is my original Senior Project, written between 1981 and 1984:

Physics Senior Thesis (pdf, 4.4 mb)

Here are the movies that I made:

| Movie | Size | YouTube |
|---|---|---|
| D’Alembert’s Solution Illustrated | 6.5 mb | D’Alembert’s Solution Illustrated |
| A Plucked String | 5.6 mb | A Plucked String |
| A Plucked Square Membrane | 3.3 mb | A Plucked Square Membrane |
| A Plucked Square Membrane Mode | 5.0 mb | A Plucked Square Membrane Mode |
| A Plucked Square Membrane Mode | 4.8 mb | A Plucked Square Membrane Mode |

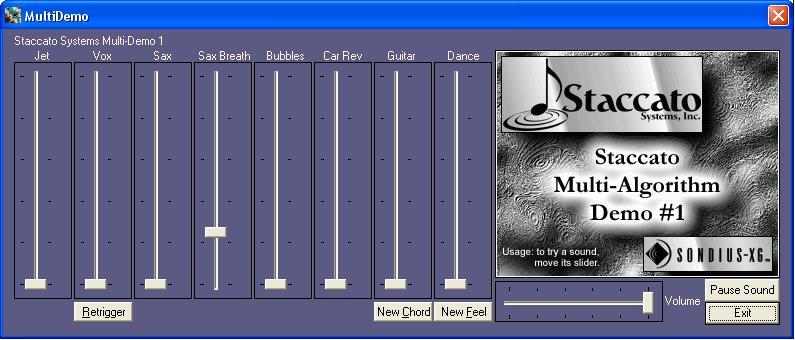

2.0 Stanford/CCRMA, Sondius Project (1994-1996)

From 1983-1994, I spent 11 years working as a programmer and manager in the EDA business (CAD software for designing chips). I yearned to return to working with audio. From 1994-1996 I was very fortunate to have an opportunity to work at Stanford/CCRMA as a paid researcher. I was a member of the "Sondius" team that developed technology around Stanford/CCRMA's Physical Modeling Patents (Sondius-XG). This was perhaps the best working experience that I have had to date. I felt that I had come full circle by working on things that had interested me 15 years earlier.

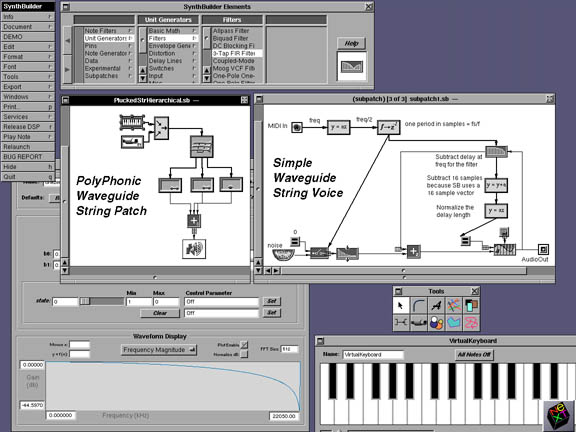

Screenshot of SynthBuilder running on an x86-based NeXT machine

At Stanford/CCRMA, I developed portions of SynthBuilder (which was written primarily by Nick Porcaro). SynthBuilder was written in Objective-C on a NeXT machine. I designed the hierarchy algorithms, the loop ordering algorithm, the pin algorithms, and the authorization code system, and I worked on many of the inspectors. While at Stanford, I co-authored a patent and seven papers (see below). SynthBuilder was awarded the 1997 Grand Prize in the Bourges International Music Software Competition.
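SynthBuilder's own loop-ordering algorithm isn't described here. As an illustration of the general problem only, a common way to order the unit generators of a patch for per-sample execution is a topological sort (Kahn's algorithm). Connections that pass through a one-sample delay are ignored, because they read the previous tick's value. The function name, the edge representation, and the unit-generator names below are all hypothetical.

```python
from collections import deque

def order_unit_generators(nodes, edges):
    """Return an execution order for the unit generators of a patch.

    nodes: unit-generator names.
    edges: (src, dst, delayed) triples. A delayed edge reads the value src produced on the
           previous tick, so it places no ordering constraint; that is what lets feedback run.
    Raises ValueError if some loop contains no delayed edge.
    """
    indegree = {n: 0 for n in nodes}
    successors = {n: [] for n in nodes}
    for src, dst, delayed in edges:
        if not delayed:
            successors[src].append(dst)
            indegree[dst] += 1

    ready = deque(n for n in nodes if indegree[n] == 0)   # nodes with no pending inputs
    order = []
    while ready:
        node = ready.popleft()
        order.append(node)
        for nxt in successors[node]:
            indegree[nxt] -= 1
            if indegree[nxt] == 0:
                ready.append(nxt)

    if len(order) != len(nodes):
        raise ValueError("patch contains a feedback loop with no delay")
    return order

# A waveguide-string-like patch: the filter feeds back into the summer through a delayed edge.
nodes = ["exciter", "sum", "delay", "filter", "out"]
edges = [("exciter", "sum", False), ("sum", "delay", False), ("delay", "filter", False),
         ("filter", "sum", True), ("filter", "out", False)]
print(order_unit_generators(nodes, edges))
# ['exciter', 'sum', 'delay', 'filter', 'out']
```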

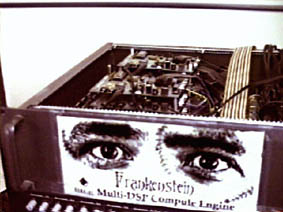

The Frankenstein 8-56k DSP engine, built by Bill Putnam and Tim Stilson

The complete DSP prototyping system: SynthBuilder and the Frankenstein Box.

I also worked on developing a number of Physical Models. These models were developed on a NeXT machine using SynthBuilder (screenshot-1 gif, 68kb, screenshot-2 gif, 55kb) and the NeXT MusicKit running on Motorola 56k DSPs. We had 3 DSP platforms. The original NeXT machine had an onboard 25 MHz 56k. Bill Putnam and Tim Stilson also designed a DSP farm known as the Frankenstein box (screenshot-3 gif, 154kb, screenshot-4 gif, 177kb) that had 8 Motorola 56k EVMs, overclocked to 80 MHz and connected to a P5 NeXT machine via an ISA interface. We also had a single EVM integrated onto an ISA card, known as a “Cocktail Frank” (Tim Stilson and I hand-built these). Here are some sound samples and a brief explanation of each model.

Waveguide Flute Model (mp3, 196 KB)

This model was based on Perry Cook's Flute model. I added a lookup table to calibrate the waveguide loop filter. I used my tin flute and a spectrum analyzer to find coefficients for the loop filter (as a function of pitch) that matched the real tin flute. This made it possible for the model to sound similar to my tin flute.
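The pitch-dependent calibration described above amounts to a lookup table indexed by pitch, with interpolation between the measured points. This is a minimal sketch that assumes a one-pole lowpass loop filter. Both the filter form and the table values are assumptions: the numbers are invented placeholders, not the tin-flute measurements.

```python
import numpy as np

# Hypothetical calibration table: pitch in Hz -> one-pole coefficient p.
# Placeholder values only; NOT measurements from a real flute.
TABLE_PITCH_HZ = np.array([262.0, 392.0, 523.0, 784.0, 1047.0])
TABLE_POLE = np.array([0.20, 0.25, 0.32, 0.40, 0.48])

def loop_filter_pole(pitch_hz):
    """Linearly interpolate the loop-filter coefficient for a pitch (clamped at the table ends)."""
    return float(np.interp(pitch_hz, TABLE_PITCH_HZ, TABLE_POLE))

def one_pole_lowpass(x, p):
    """y[n] = (1 - p) * x[n] + p * y[n-1]: unity gain at DC, more high-frequency loss as p grows."""
    y = np.empty(len(x))
    state = 0.0
    for n, sample in enumerate(x):
        state = (1.0 - p) * sample + p * state
        y[n] = state
    return y
```

Interpolating between a handful of measured pitches keeps the table small while still letting the filter track every note the controller can play.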

This recording was made in August 1996 on the Frankenstein DSP box. In this recording, I’m playing the flute model using a 6-channel guitar MIDI controller and a breath-control headset (for overblowing the flute). I really enjoyed playing this model. Overblowing the model was very expressive.

Waveguide Guitar Model, Different Pickups (mp3, 386 kb)

Waveguide Guitar Model, Distortion and Amplifier Feedback (mp3, 631 kb)

Waveguide Guitar Model, Wah-wah (mp3, 295 kb)

Waveguide Guitar Model, Jazz Guitar (ES-175) (mp3, 436 kb)

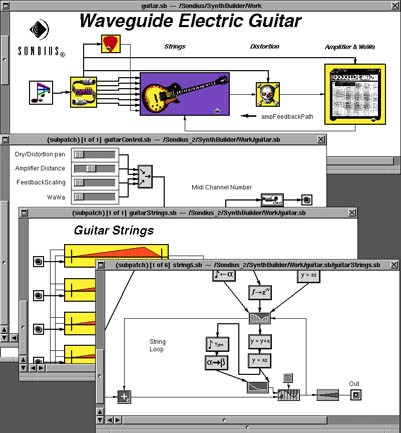

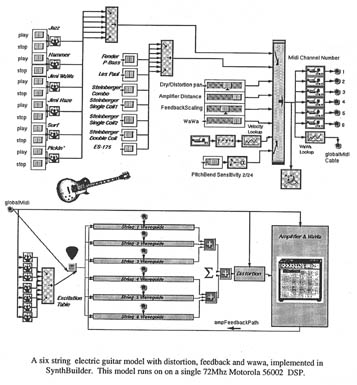

The Waveguide Electric Guitar Model

This model was based on Julius Smith's Guitar models, as well as a distortion model that was done by C. R. Sullivan (CMJ fall 1990). I added many new things to this model.

· I designed it to be a 6 string model running on 6 MIDI channels so that my guitar MIDI controller could control it polyphonically. It responded to polyphonic PitchBend.

· I added the amplifier feedback model with a distance control so that the "sweet spot" for feedback could be controlled by MIDI. I usually mapped this to a foot pedal so that I could change the amp distance while playing the model.

· I added a wah-wah pedal. Tim Stilson and Bill Putnam improved it by collecting some real data from my old Cry Baby wah-wah and building an improved version of the wah-wah pedal.

· This model had selector switches that allowed you to change the model to sound like several different classic electric guitars. It was in part based on a pick model using data from real guitars. I collected data from my Steinberger, Les Paul and ES-175. I found that I could use the first period of the real instruments as the excitation (the pick)! In addition, I created lookup tables for the filter coefficients so that the decay time of the strings would match the real instruments.

· This model led us to invent the patented DelayAI delay line (patent, paper pdf, 671kb). I found that high notes would decay too quickly when I used a linearly interpolating delay line, because the linear interpolation in the delay line itself acts as a lowpass filter. The DelayAI delay line combines the lossless property of an allpass filter with the ability to change the delay length without clicks (as with the interpolating delay line).
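A rough calculation shows why high notes suffered:

- If a partial should decay by 60 dB in T60 seconds, the loop must lose a factor of 10^(-3/(f0·T60)) per period.
- A linear interpolator with fractional delay η has gain |(1 - η) + η e^(-jω)| < 1 for 0 < η < 1 and 0 < ω ≤ π.
- That extra loss is applied once per period, so it matters more as pitch rises.
- A first-order allpass interpolator has unit gain at every frequency.

The sketch below makes these assumptions:

- a flat string loss;
- a 44.1 kHz sample rate;
- η computed while ignoring the loop filter's phase delay;
- the usual allpass coefficient a = (1 - η)/(1 + η).

It only illustrates the loss problem. It is not the DelayAI scheme itself, which also addresses click-free delay-length changes.

```python
import numpy as np

def loop_gain_for_t60(f0, t60):
    """Per-period loop gain that makes a partial at f0 decay 60 dB (a factor of 1000) in t60 s."""
    return 10.0 ** (-3.0 / (f0 * t60))

def t60_from_loop_gain(f0, g):
    """Inverse of loop_gain_for_t60: decay time in seconds for a per-period gain g < 1."""
    return -3.0 / (f0 * np.log10(g))

def linear_interp_mag(eta, omega):
    """|H| of linear interpolation at fractional delay eta: H = (1 - eta) + eta * e^{-j omega}."""
    return abs((1.0 - eta) + eta * np.exp(-1j * omega))

def allpass_interp_mag(eta, omega):
    """|H| of a first-order allpass (a + z^-1) / (1 + a z^-1) with a = (1 - eta) / (1 + eta)."""
    a = (1.0 - eta) / (1.0 + eta)
    z_inv = np.exp(-1j * omega)
    return abs((a + z_inv) / (1.0 + a * z_inv))

def compare(f0, fs=44100.0, target_t60=2.0):
    """Effective T60 of the fundamental when the string loop includes each interpolator."""
    eta = (fs / f0) % 1.0              # fractional part of the loop delay (filter delay ignored)
    omega = 2.0 * np.pi * f0 / fs      # fundamental in radians per sample
    g = loop_gain_for_t60(f0, target_t60)
    t60_linear = t60_from_loop_gain(f0, g * linear_interp_mag(eta, omega))
    t60_allpass = t60_from_loop_gain(f0, g * allpass_interp_mag(eta, omega))
    return eta, t60_linear, t60_allpass

for f0 in (82.41, 329.63, 1318.51):    # open low E, open high E, and E two octaves higher
    eta, lin, ap = compare(f0)
    print(f"f0={f0:8.2f} Hz  eta={eta:.2f}  T60 linear={lin:.2f} s  allpass={ap:.2f} s")
```

Under these assumptions, the low E barely changes. The fundamental of the highest note decays more than twice as fast as intended with linear interpolation, while the allpass version keeps the 2-second target.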

This recording was made in August 1996 on the Frankenstein DSP box. I’m playing the guitar model using a 6-channel guitar MIDI controller, a MIDI pedal for wah-wah, and a MIDI pedal for amplifier distance. This model was eventually ported to the Pentium/SynthCore platform during the early days of Staccato. I used to LOVE playing this model. It was really like playing a super hot electric guitar. Sometimes when I was working at Staccato late at night, I would fire up this model and play Neil Young's theme from the movie "Dead Man". Totally haunting. I believe that it will be years before another guitar player will be able to have access to a model like this.

Harpsichord Model (mp3, 341 kb)

I developed the harpsichord model from scratch. It had a pair of slightly detuned, coupled string models to model the pair of strings found in a real harpsichord. Later, Scott Van Duyne contributed the excitation model. This model used Julius Smith's commuted synthesis to model the soundboard.
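The effect of a slightly detuned string pair can be heard even with a much simpler model than the one described above. The sketch below uses two basic Karplus-Strong strings, a standard stand-in rather than the actual harpsichord model. Their loop lengths differ by one sample, so their pitches differ slightly and the mix beats slowly. Unlike the real model, these two strings are not coupled, there is no excitation or soundboard model, and the `loss` value is an arbitrary assumption.

```python
import numpy as np

def pluck(period, n_samples, rng, loss=0.996):
    """Basic Karplus-Strong string: a noise burst recirculating through a delay of
    `period` samples and a two-point averaging (lowpass) filter.
    The loop delay is about period + 0.5 samples."""
    y = np.zeros(n_samples)
    y[:period] = rng.uniform(-1.0, 1.0, period)   # the "pluck": one period of noise
    for n in range(period, n_samples):
        older = y[n - period - 1] if n > period else 0.0
        y[n] = loss * 0.5 * (y[n - period] + older)
    return y

def detuned_pair(period, n_samples, fs=44100.0, seed=0):
    """Mix two uncoupled strings whose loops differ by one sample.
    Returns the mix and the approximate beat frequency in Hz."""
    rng = np.random.default_rng(seed)
    mix = 0.5 * (pluck(period, n_samples, rng) + pluck(period + 1, n_samples, rng))
    beat_hz = fs / (period + 0.5) - fs / (period + 1.5)
    return mix, beat_hz
```

With `period=400` at 44.1 kHz, both strings sit near 110 Hz and beat at roughly 0.27 Hz.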

Tibetan Bell Model (mp3, 509 kb)

Wind Chime Model (mp3, 422 kb)

This model is based on Scott Van Duyne's Coupled Mode Synthesis (CMS). It was calibrated using Julius Smith and Scott Van Duyne's calibration MATLAB code. What's really great about CMS is that if you have a real recording of a percussive instrument, you can get the model to sound fairly similar. Basically, the real recording can be analyzed into partials, each with a unique decay rate (coupling between partials is ignored). The model can be calibrated with a simplified list of partials and their decay rates. Also, the excitation "hammer" model responded to MIDI velocity so that you could hit the bells hard or soft. If you hit the bell a second time, the model already contains energy from the initial hit, allowing you to "rearticulate" the model.

The data used to calibrate these models came from a bell that I brought back from Tibet, and from a tenor wind chime in my back yard.
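At its simplest, a model calibrated from a list of partials and decay rates can run as a bank of independent two-pole resonators, one per partial. This sketch illustrates that idea under stated assumptions; it is not Van Duyne's CMS implementation. The partial frequencies, decay times, and amplitudes in the tests are placeholders, and a strike is simply an impulse scaled by MIDI velocity. Each resonator keeps its state between calls, so a second strike adds to the energy that is still ringing. That is the rearticulation behavior described above.

```python
import numpy as np

class ModalBank:
    """Sum of independent exponentially decaying partials (coupling between partials ignored)."""

    def __init__(self, partials, fs=44100.0):
        # partials: list of (frequency_hz, t60_seconds, amplitude) triples.
        a1, a2, b0 = [], [], []
        for freq, t60, amp in partials:
            r = 10.0 ** (-3.0 / (t60 * fs))      # pole radius: 60 dB of decay after t60 seconds
            theta = 2.0 * np.pi * freq / fs       # pole angle sets the partial's frequency
            a1.append(2.0 * r * np.cos(theta))
            a2.append(-r * r)
            b0.append(amp * np.sin(theta))        # scales the impulse response to amp * r^n * sin((n+1) theta)
        self.a1, self.a2, self.b0 = np.array(a1), np.array(a2), np.array(b0)
        self.y1 = np.zeros(len(partials))         # y[n-1] for each partial
        self.y2 = np.zeros(len(partials))         # y[n-2] for each partial

    def render(self, n_samples, strike=0.0):
        """Render n_samples, injecting an impulse of size `strike` at the first sample.
        State carries over between calls, so a new strike adds to what is still ringing."""
        out = np.empty(n_samples)
        for n in range(n_samples):
            x = strike if n == 0 else 0.0
            y = self.a1 * self.y1 + self.a2 * self.y2 + self.b0 * x
            self.y2, self.y1 = self.y1, y
            out[n] = y.sum()
        return out
```

A MIDI velocity of 100 would map to `strike=100 / 127.0`. Because the bank is linear, a harder hit simply scales the response.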

RECORDINGS

All recordings are Synthesized Physical Models played from a Roland guitar controller.

Here are all the Sondius Recordings.

| Recording | Length |
|---|---|
| 01 Sondius Demo | 2:49 |
| 02 Waveguide Flute | 0:18 |
| 03 Taiko Percussion | 0:23 |
| 04 Percussion Using CMS | 1:03 |
| 05 Tibetan Bell Using CMS | 0:48 |
| 06 Windchimes Using CMS | 0:43 |
| 07 Tubular Bells Using CMS | 1:13 |
| 08 Under The Sea, All Physical Model | 0:27 |
| 09 Israel - Piano, Bass | 3:21 |
| 10 Harpsichord | 2:28 |
| 11 Guitar Model | 3:38 |
| 12 Acoustic Bass | 0:58 |
| 13 Tek_No | 1:47 |
| 14 James - Rhodes, Bass, Percussion | 4:00 |

We wrote a number of papers during this research period, won an award for SynthBuilder, and I was co-author of one patent.

PATENTS

5,742,532: April 21, 1998. Van Duyne; Scott A. (Stanford, CA), Jaffe; David A. (Berkeley, CA), Scandalis; Gregory P. (Mountain View, CA), Stilson; Timothy S. (Mountain View, CA) "System and Method for Generating Fractional Length Delay Lines in a Digital Signal Processing System."

6,959,094: October 25, 2005. Cascone; Kim (Pacifica, CA), Petkevich; Daniel T. (San Jose, CA), Scandalis; Gregory P. (Mountain View, CA), Stilson; Timothy S. (Mountain View, CA), Taylor; Kord F. (San Jose, CA), Van Duyne; Scott A. (Palo Alto, CA) "Apparatus and methods for synthesis of internal combustion engine vehicle sounds"

PAPERS

“SynthBuilder: A Graphical Rapid-Prototyping Tool for the Development of Music Synthesis and Effects Patches on Multiple Platforms” (pdf, 1.5 mb), Nick Porcaro, David Jaffe, Pat Scandalis, Julius Smith, Tim Stilson, and Scott Van Duyne, Computer Music Journal, Volume 22, Number 2, pp. 35-43, MIT Press, 1998.

“A Lossless, Click-Free, Pitchbend-able Delay Line Loop Interpolation Scheme” (pdf, 671 kb), Scott A. Van Duyne, David A. Jaffe, Gregory Pat Scandalis, and Timothy S. Stilson, 1997 International Computer Music Conference, Greece, 1997.

“SynthBuilder and Frankenstein” (pdf, 305 kb), N. Porcaro, W. Putnam, P. Scandalis, T. Stilson, D. Jaffe, J. O. Smith, and S. Van Duyne, ICAD 1996.

“Work in Progress, SynthScript and SynthServer” (pdf, 8 kb), P. Scandalis and David Jaffe, CCRMA Affiliates Presentation, 1996.

“SynthBuilder: A Rapid-Prototyping Tool for Sound Synthesis and Audio” (pdf, 14 kb), Nick Porcaro, Pat Scandalis, and Julius Smith, presented at the Berkeley EE seminar, 1996.

“Using SynthBuilder for the Creation of Physical Models” (pdf, 283 kb), N. Porcaro, P. Scandalis, D. Jaffe, and J. O. Smith, 1996 International Computer Music Conference, Hong Kong, 1996.

“SynthBuilder Demonstration, A Graphical Real-Time Synthesis, Processing and Performance System” (pdf, 415 kb), Nick Porcaro, Pat Scandalis, Julius Smith, David Jaffe, and Tim Stilson, 1995 International Computer Music Conference, Banff, 1995.

Awards

SynthBuilder wins Grand Prize in Second International Music Software Competition (pdf 10 kb) Bourges, France, 1997

3.0 Staccato Systems (1997-2000)

Early Staccato Logo

Later Staccato Logo

In late 1996, at Stanford, we were working on a portable, host-based implementation of something like the NeXT MusicKit. This was known as SynthServer/SynthScript. SynthServer was a portable synthesis engine. SynthScript was an interchange format (inspired by PostScript) for describing synthesis algorithms (paper, early spec pdf cache 325 kb). David Jaffe implemented SynthServer and I implemented SynthScript. By November 1996, SynthBuilder was able to send SynthScript algorithms and real-time updates to SynthServer via a network connection.
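The actual SynthScript syntax is not reproduced here. To illustrate what a PostScript-style interchange format involves, the sketch below parses a made-up postfix notation. Numbers are pushed onto a stack, and each word pops its inputs and pushes a node describing a unit generator. The word names (`sine`, `noise`, `mul`, `add`, `out`), their arities, and the nested-tuple representation are all hypothetical.

```python
def parse_patch(source):
    """Tiny postfix interpreter for a hypothetical patch language.

    Numbers are pushed onto a stack; each word pops its inputs and pushes a
    (word, *inputs) tuple. `out` pops one node and records it as a patch output.
    """
    arity = {"sine": 1, "noise": 0, "mul": 2, "add": 2, "out": 1}
    stack, outputs = [], []
    for token in source.split():
        try:
            stack.append(float(token))
            continue
        except ValueError:
            pass                                   # not a number, so it must be a word
        if token not in arity:
            raise ValueError(f"unknown word: {token}")
        n = arity[token]
        if len(stack) < n:
            raise ValueError(f"stack underflow at {token}")
        args = stack[len(stack) - n:]
        del stack[len(stack) - n:]
        if token == "out":
            outputs.append(args[0])
        else:
            stack.append((token, *args))
    if stack:
        raise ValueError("values left on the stack")
    return outputs

print(parse_patch("440 sine 0.5 mul out"))
# [('mul', ('sine', 440.0), 0.5)]
```

A postfix form like this needs no grammar beyond "push or apply", which is part of what makes PostScript-style formats easy to generate from a graphical editor and to parse on a small engine.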

In early 1997, the Sondius Team from Stanford (David Jaffe, Joe Kopenick, Nick Porcaro, Pat Scandalis, Julius Smith, Tim Stilson, Scott Van Duyne) formed Staccato Systems.

The SynthServer/SynthScript work was brought to Staccato and evolved into SynthCore. SynthBuilder was ported to Windows using Apple Computer’s “Yellow Box” technology. Here is a video of SynthBuilder/SynthCore as it was around 2000. Note that I made this video in March 2012 using my legacy Win98 machine with Yellow Box installed. As far as I know, this is the only extant copy of SynthBuilder for Windows.

SynthCore was both a DLS (Downloadable Sounds)/General MIDI synthesizer and an algorithmic synthesizer that could be programmed via SynthScript Down Loadable Algorithms (DLAs). SynthCore was offered as a product in two forms:

· Packaged as a “MIDI driver”, SynthCore could replace the wavetable chip on a sound card for a host-based audio solution (SynthCore-OEM)

· Packaged as a DLL/COM service, SynthCore could be integrated into game titles so that games could make use of interactive audio algorithms (race car, space guns, car crashes) (SynthCore-SDK)

Here is a zip file of a demo that Staccato made. This was mostly the work of Tim Stilson and Scott Van Duyne. It is a small program that uses the SynthCore DLL to load a collection of DLAs (found in an encrypted .dla file).

At Staccato I was both a programmer and the manager of the SynthCore team. As a programmer I developed SynthScript (pdf cache 325 kb) and its parser, the DLAManager (Down Loadable Algorithm Manager) (pdf cache), much of the public API, some of the documentation, and most of the integration work to productize the two SynthCore products. David Jaffe wrote the core synthesis engine and the XG-lite General MIDI synthesizer. David Van Brink wrote the DLS-1 synthesizer. Danny Petkevich optimized the code for SIMD instruction sets and developed the reverb and chorus algorithms.

I did very little modeling work at Staccato. However, Danny Petkevich and I developed a technique that we called “Compositing”. In May 1999 I watched a sound designer (Nick Peck) create a complex gun sound for a game, and watching how Nick worked was the inspiration for “Compositing”. He wanted to start from a collection of small sound samples and create a set of 10 or so “composite” gun samples that could be used in a game. He wanted these sounds to be similar but different, so that the gun sound was not repetitive. For example, he had 5 fire samples, 5 recoil samples and 5 hit samples. In Pro Tools he set up these sounds as 5 tracks in 3 columns. When he rendered a final gun sound, he mixed and matched samples from each column. He would sometimes trigger the sounds in random and statistical clusters.

Starting in June 1999, Danny implemented these ideas as a set of “Compositing” sound algorithms for race car games, covering things like tire skids, crashes, thuds, and scrapes. The algorithms used SynthCore’s DLS-1 synthesizer, a custom sample set, and an event-processing algorithm that translated game collision events into random and statistical clusters of triggered samples. I believe that this algorithm was used in Electronic Arts’ “NASCAR 2000”.
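The mix-and-match idea can be sketched in a few lines. Each composite picks one sample per column with a small random start offset, and a "statistical cluster" scatters several composites across a short window, for example a crash. This is an illustration under assumptions, not Danny's SynthCore algorithm. The sample file names, the 20 ms jitter, and the 250 ms spread are hypothetical, and the sketch plays all columns within the same small window rather than spacing fire, recoil, and hit apart.

```python
import random

# Hypothetical sample names: 5 variations in each of 3 columns, as in the Pro Tools session.
COLUMNS = {
    "fire": [f"fire_{i}.wav" for i in range(1, 6)],
    "recoil": [f"recoil_{i}.wav" for i in range(1, 6)],
    "hit": [f"hit_{i}.wav" for i in range(1, 6)],
}

def composite(columns, rng, max_jitter_ms=20.0):
    """One composite sound: one randomly chosen sample per column, each with a random start time."""
    events = [(rng.uniform(0.0, max_jitter_ms), column, rng.choice(samples))
              for column, samples in columns.items()]
    return sorted(events)                      # (start_ms, column, sample), in time order

def cluster(columns, rng, count, spread_ms=250.0):
    """A statistical cluster: `count` composites scattered over a spread_ms window."""
    events = []
    for _ in range(count):
        start = rng.uniform(0.0, spread_ms)
        events.extend((start + t, column, sample) for t, column, sample in composite(columns, rng))
    return sorted(events)
```

With 5 choices in each of 3 columns there are 125 sample combinations before timing variation, which is why the results sound similar but rarely identical.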

As a footnote, SynthCore-OEM is now a part of the Analog Devices "SoundMAX" product. It can be found on about 60% of shipping PCs (see http://www.soundmax.com/OEMPartners/index.html). Examples include the currently shipping HP Pavilion (HP502n), Compaq (1510, 705) and IBM (NetVista A and M Series) systems, as well as the Intel® D845PEBT2 Desktop Motherboard. I co-developed SynthCore-OEM and managed the development team. SynthCore-SDK can be found in a number of games, including Electronic Arts' NASCAR-Revolution and NASCAR-2000. I co-developed SynthCore-SDK and managed the development team.

4.0 TuneTo.com (2000)

At TuneTo.com, I was the VP of OEM software. TuneTo’s work eventually evolved into what is today known as Rhapsody. I productized TuneTo.com’s Internet Radio SDK. The work consisted of performance and memory tuning, writing all documentation, developing whitebox tests, setting up automated build-and-test systems, and finalizing SDK packaging. I also co-ported a predecessor of Rhapsody (known as Aladdin, written by Sylvain Rebaud) to an early Windows CE mobile platform. I tested this by driving up and down Highway 101 and playing music from the mobile device. As far as I know, this was the first example of a mobile music service.

5.0 Jarrah Systems (2001-2003)

From 2001-2003 I did consulting (as Jarrah Systems) for various digital media companies. I also worked on my own ideas. I built an early Media Center PC and developed business plans for a Digital Media Appliance with a unique cabling interface. I also developed a prototype for a Time Shift Radio device based on the D-Link DSB-R100, which used acoustic signatures to identify content recorded from terrestrial radio.

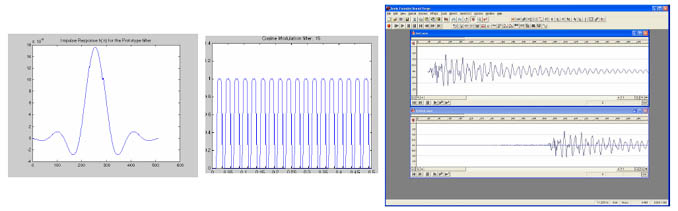

6.0 MP3 Layer-1 encoder/decoder in Matlab (2004)

In 2004 I took the "Professional Sequence in DSP" at the University of California, Berkeley. As a class project, I developed an MP3 Layer-1 Encoder/Decoder. Here is the writeup. You can also download all the MATLAB code (zip file).
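As a small worked example of the bitstream arithmetic such a project involves, consider an MPEG-1 Layer I frame. Layer I is the simplest layer of MPEG-1 audio; MP3 proper is Layer III. Each frame carries 384 samples per channel (32 subbands × 12 samples), and its length is counted in 4-byte slots. This sketch uses the standard Layer I frame-length formula and is not taken from the class project code.

```python
SAMPLES_PER_FRAME = 32 * 12   # 32 subbands x 12 samples = 384 samples per channel

def layer1_frame_bytes(bitrate_bps, sample_rate_hz, padding=0):
    """Length of one MPEG-1 Layer I frame in bytes: 4 * (floor(12 * bitrate / fs) + padding)."""
    slots = (12 * bitrate_bps) // sample_rate_hz + padding
    return 4 * slots

def frame_duration_ms(sample_rate_hz):
    """Playing time of one frame in milliseconds."""
    return 1000.0 * SAMPLES_PER_FRAME / sample_rate_hz

print(layer1_frame_bytes(384_000, 48_000))   # 96 slots of 4 bytes -> 384
print(layer1_frame_bytes(128_000, 44_100))   # 34 slots -> 136
```

At 44.1 kHz, 12 × bitrate / fs is not an integer. The encoder therefore sets the padding bit on some frames to add a slot, which keeps the average bitrate exact.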

7.0 Liquid Digital Media (2003-2012)

From 2003-2012 I worked for Liquid Digital Media (formerly Liquid Audio). Initially my role at Liquid was Technologist. In 2005 I became the VP of Technology and from 2006 to 2012 I directly ran Liquid.

Liquid was the first legal music download service. Liquid also powered all of Walmart.com's digital music properties end-to-end. This included several different "a la carte" music stores with and without DRM, in-store and online custom CDs, various code-based promo systems, and a video-on-demand (VOD) system.

Under my aegis we completely re-implemented all of our systems front-to-back and launched the first DRM-free MP3 download service (March 2008) several months before Apple and Amazon.

In 2009/2010 we developed a next-generation "Music In The Cloud/Digital Locker". This was several years before Amazon, Apple and Google. Here is a video of that system.